Project - Eigenflow Based Face Authentication


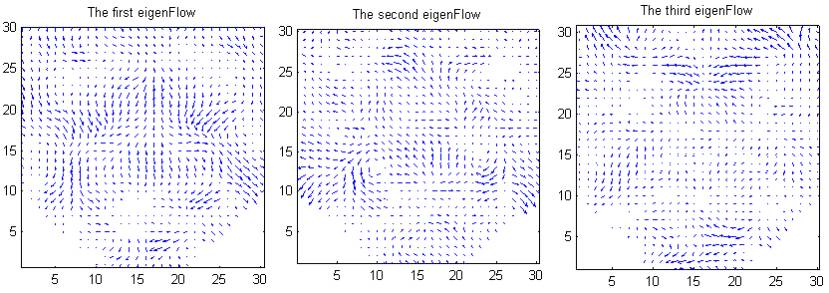

Train Eigenflow Using PCA

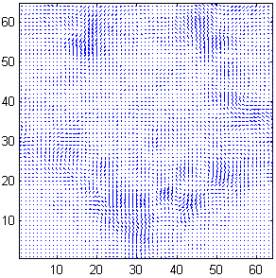

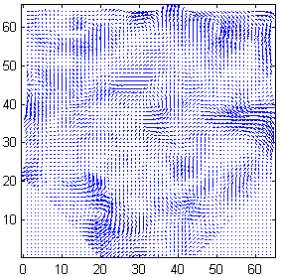

Optical flow images between face images are trained using Principal Component Analysis (PCA). This figure shows the first three eigenflows trained from expression images of one subject. Some prominent movements of facial features, such as the mouth corners, eyebrows, scale, and nasolabial furrow, can be seen in them.

The residue of a test optical flow with respect to this eigenflow space, that is, the part of the flow that the eigenflows cannot reconstruct, can be useful for authentication.
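The training and residue steps described above can be sketched as follows. This is a minimal illustration, not the implementation used in this project: it assumes each optical flow field has already been computed and flattened into a vector, uses NumPy's SVD to obtain the principal components, and measures the residue as the Euclidean norm of the reconstruction error. The function names `train_eigenflow` and `eigenflow_residue` and the synthetic data are hypothetical.

```python
import numpy as np

def train_eigenflow(flows, n_components):
    """Fit a PCA basis to flattened optical-flow fields.

    flows: array of shape (n_samples, n_features), one flattened flow per row.
    Returns (mean, basis); basis has shape (n_components, n_features) and
    its rows (the "eigenflows") are orthonormal.
    """
    flows = np.asarray(flows, dtype=float)
    mean = flows.mean(axis=0)
    centered = flows - mean
    # Rows of vt are the principal directions, sorted by decreasing variance.
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    return mean, vt[:n_components]

def eigenflow_residue(flow, mean, basis):
    """Norm of the part of `flow` that the eigenflow space cannot reconstruct."""
    centered = np.asarray(flow, dtype=float) - mean
    coeffs = basis @ centered          # project onto the eigenflows
    reconstruction = basis.T @ coeffs  # map back to flow space
    return float(np.linalg.norm(centered - reconstruction))

# Synthetic stand-in for real optical flow (assumption): 40 training flows
# that all lie in a 2-dimensional subspace of a 50-dimensional flow space.
rng = np.random.default_rng(0)
directions = rng.normal(size=(2, 50))
train_flows = rng.normal(size=(40, 2)) @ directions
mean, basis = train_eigenflow(train_flows, n_components=2)

in_space = rng.normal(size=2) @ directions  # same kind of motion as training
off_space = rng.normal(size=50)             # unrelated motion

residue_in = eigenflow_residue(in_space, mean, basis)
residue_off = eigenflow_residue(off_space, mean, basis)
print(residue_in, residue_off)
```

In this toy setting, a flow made of the same motions as the training set leaves almost no residue, while an unrelated flow leaves a large one, which is the property that makes the residue a candidate authentication feature.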

Linear Discriminant Analysis

Finally, the eigenflow residue is combined with the optical flow residue using linear discriminant analysis (LDA) to determine the authenticity of the test image.

We have conducted experiments with this approach on our own database and on public databases. The experimental results show that the proposed scheme outperforms traditional methods in the presence of facial expression variations and registration errors.
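As a rough illustration of how two residues can be combined with LDA, the sketch below fits a two-class Fisher discriminant to pairs of (eigenflow residue, optical flow residue) and thresholds the projected score. It is an assumption-laden example rather than the method used in the experiments: the page does not say how the discriminant is trained or where the threshold is set, so the midpoint threshold, the function names `fit_lda` and `is_genuine`, and the synthetic residue values are all hypothetical.

```python
import numpy as np

def fit_lda(genuine, impostor):
    """Two-class Fisher LDA on rows of [eigenflow_residue, optical_flow_residue].

    Returns (w, threshold). Impostor samples project to higher scores than
    genuine ones, so a sample x is accepted when w @ x < threshold.
    """
    genuine = np.asarray(genuine, dtype=float)
    impostor = np.asarray(impostor, dtype=float)
    mu_g, mu_i = genuine.mean(axis=0), impostor.mean(axis=0)
    # Within-class scatter: sum of the two classes' scatter matrices.
    sw = (genuine - mu_g).T @ (genuine - mu_g) \
        + (impostor - mu_i).T @ (impostor - mu_i)
    # Fisher direction: Sw^{-1} (mu_impostor - mu_genuine).
    w = np.linalg.solve(sw, mu_i - mu_g)
    # Assumption: threshold halfway between the projected class means.
    threshold = 0.5 * (w @ mu_g + w @ mu_i)
    return w, threshold

def is_genuine(residues, w, threshold):
    """Accept the claim when the projected score falls below the threshold."""
    return bool(w @ np.asarray(residues, dtype=float) < threshold)

# Synthetic residue pairs (assumption): genuine claims give small residues,
# impostor claims give large ones.
rng = np.random.default_rng(1)
genuine = rng.normal(loc=[1.0, 1.0], scale=0.3, size=(100, 2))
impostor = rng.normal(loc=[4.0, 5.0], scale=0.3, size=(100, 2))

w, threshold = fit_lda(genuine, impostor)
print(is_genuine([1.0, 1.0], w, threshold))
print(is_genuine([4.0, 5.0], w, threshold))
```

A practical system would more likely choose the threshold from a target false-accept or false-reject rate than from the midpoint used here.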

| Download |

As the first step of this research, we collected a face database with expression variations, which is available to the public. This data set contains:

- 13 subjects.

- Each subject has 75 images showing different expressions.

These face images were collected under the same lighting conditions using a CCD camera. The face images have been registered using the eye locations. The following example shows some expression images of one subject.

Download our face expression database now.

| Our work is used by... |

- Institute for Information Industry (III), Taiwan http://www.iii.org.tw/

- Mediasite http://www.mediasite.com/

| Publications |

- Xiaoming Liu, Tsuhan Chen and B. V. K. Vijaya Kumar, "Robust Face Authentication for Multiple Subjects Using Eigenflow", Carnegie Mellon Technical Report AMP01-05.

- Xiaoming Liu, Tsuhan Chen and B. V. K. Vijaya Kumar, "Face Authentication for Multiple Subjects Using Eigenflow", Pattern Recognition, special issue on Biometrics, Volume 36, Issue 2, February 2003, pp. 313-328.

- Xiaoming Liu, Tsuhan Chen and B. V. K. Vijaya Kumar, "On Modeling Variations for Face Authentication", in Proceedings of the International Conference on Automatic Face and Gesture Recognition 2002, pp. 369-374, 20-21 May 2002.

Any suggestions or comments are welcome. Please send them to Xiaoming Liu.
